ImageNet Classification with Deep Convolutional Neural Networks

Architecture:

- 8 layers deep (5 convolutional layers, 3 fully connected layers)

- Used ReLU activation functions

- Implemented dropout for regularisation

- Employed data augmentation techniques

How many layers does AlexNet have in total?

1. 8 layers - 5 convolutional and 3 fully connected

What type of neural network is AlexNet (e.g., feedforward, recurrent, convolutional)?

1. Convolutional

What activation function did AlexNet popularize?

1. ReLU

Name one regularization technique used in AlexNet to prevent overfitting.

1. Dropout

Intermediate Level:

Explain the significance of AlexNet in the context of the deep learning revolution.

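- Its win at ILSVRC-2012 (top-5 test error of 15.3%, against 26.2% for the second-best entry) made deep CNNs the dominant approach in computer vision. It also popularised ReLU activations and dropout, although it did not invent either.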

How did AlexNet utilize GPUs, and why was this important?

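- It split the network across two GTX 580 GPUs (3 GB of memory each), putting half of the kernels on each GPU and letting the GPUs communicate only in certain layers. This was important because the network was too large to fit in the memory of a single GPU, and GPU training made a model of this size practical (training took five to six days).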

Describe the purpose and mechanism of the dropout technique used in AlexNet.

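- Purpose: regularisation, i.e. reducing overfitting in the fully connected layers. Mechanism: during training, the output of each hidden neuron is set to zero with probability 0.5, so dropped neurons take part in neither the forward pass nor backpropagation and neurons cannot rely on the presence of particular other neurons. At test time all neurons are used, with their outputs multiplied by 0.5. AlexNet applied dropout in the first two fully connected layers.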
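Dropout as described in the AlexNet paper can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' code: the function names `dropout_train` and `dropout_test` and the list-of-floats representation are assumptions made for this example. Note that it scales at test time, as in the paper, rather than using the "inverted dropout" scaling common in modern libraries.

```python
import random

def dropout_train(activations, p_drop=0.5, rng=None):
    """Training pass: zero each activation independently with probability p_drop."""
    if rng is None:
        rng = random.Random()
    return [0.0 if rng.random() < p_drop else a for a in activations]

def dropout_test(activations, p_drop=0.5):
    """Test pass: keep every neuron, but scale outputs by the keep probability."""
    return [a * (1.0 - p_drop) for a in activations]

if __name__ == "__main__":
    rng = random.Random(0)  # seeded only to make the demo repeatable
    hidden = [0.8, 1.5, 0.2, 2.0]
    print("train:", dropout_train(hidden, rng=rng))
    print("test: ", dropout_test(hidden))
```

A different random mask is drawn for every training example, so the network effectively samples a different architecture each time while sharing the same weights.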

How did AlexNet's use of ReLU activation functions contribute to its success?

- Deep convolutional neural networks with ReLUs train several times faster than their equivalents with tanh units, because ReLU is a non-saturating nonlinearity while tanh saturates for large inputs.
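One way to see why a non-saturating unit helps gradient descent is to compare the derivatives of the two activations at a large input. The snippet below is an illustrative sketch only; the helper names `relu_grad` and `tanh_grad` are made up for this example and do not come from the paper.

```python
import math

def relu_grad(x):
    """Derivative of max(0, x): 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0

def tanh_grad(x):
    """Derivative of tanh(x): 1 - tanh(x)^2, which shrinks toward 0 as |x| grows."""
    return 1.0 - math.tanh(x) ** 2

if __name__ == "__main__":
    for x in (0.5, 2.0, 5.0):
        print(f"x={x}: relu'={relu_grad(x)}, tanh'={tanh_grad(x):.6f}")
```

For positive inputs the ReLU gradient stays at 1 regardless of magnitude, while the tanh gradient becomes very small once the unit saturates, which slows the weight updates flowing back through it.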

Advanced Level:

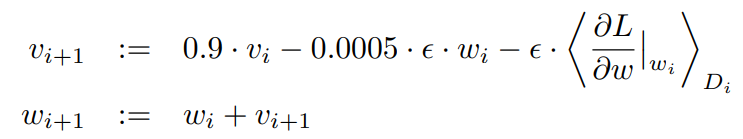

Explain the update rule used in AlexNet's training process, including the role of momentum and weight decay.

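$i$ is the iteration index, $v$ is the momentum variable, $\epsilon$ is the learning rate, and

$\left\langle \frac{\partial L}{\partial w}\Big|_{w_i} \right\rangle_{D_i}$ is the average over the $i$th batch $D_i$ of the derivative of the objective with respect to $w$, evaluated at $w_i$.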
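The update rule can be sketched for a single scalar weight as follows. The momentum (0.9) and weight decay (0.0005) values are those reported in the paper; the function name `sgd_step`, the scalar formulation, and the toy objective in the demo are assumptions made purely for illustration.

```python
def sgd_step(w, v, grad, lr, momentum=0.9, weight_decay=0.0005):
    """One update of SGD with momentum and weight decay.

    v_next = momentum * v - weight_decay * lr * w - lr * grad
    w_next = w + v_next
    `grad` stands for the batch-averaged derivative of the objective at w.
    """
    v_next = momentum * v - weight_decay * lr * w - lr * grad
    w_next = w + v_next
    return w_next, v_next

if __name__ == "__main__":
    # Toy objective L(w) = w^2 / 2, so the gradient is simply w (hypothetical example).
    w, v = 1.0, 0.0
    for step in range(5):
        w, v = sgd_step(w, v, grad=w, lr=0.01)
        print(step, round(w, 6), round(v, 6))
```

The momentum term carries a fraction of the previous velocity forward, which smooths the updates across batches, while the weight decay term pulls each weight slightly toward zero on every step. The paper reports that this small amount of weight decay was important for the model to learn, reducing training error rather than acting only as a regulariser.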

How did the splitting of the network across two GPUs affect the architecture and training process?

Discuss the implications of visualizing the learned features of AlexNet's first convolutional layer.

Compare and contrast AlexNet's approach to data augmentation with more modern techniques.